What is CUDA compute capability?

Is my card CUDA enabled?

To check whether your computer has an NVIDIA GPU and whether it is CUDA enabled, right-click on the Windows desktop. If you see “NVIDIA Control Panel” or “NVIDIA Display” in the pop-up menu, the computer has an NVIDIA GPU. Click on “NVIDIA Control Panel” or “NVIDIA Display” in that menu.

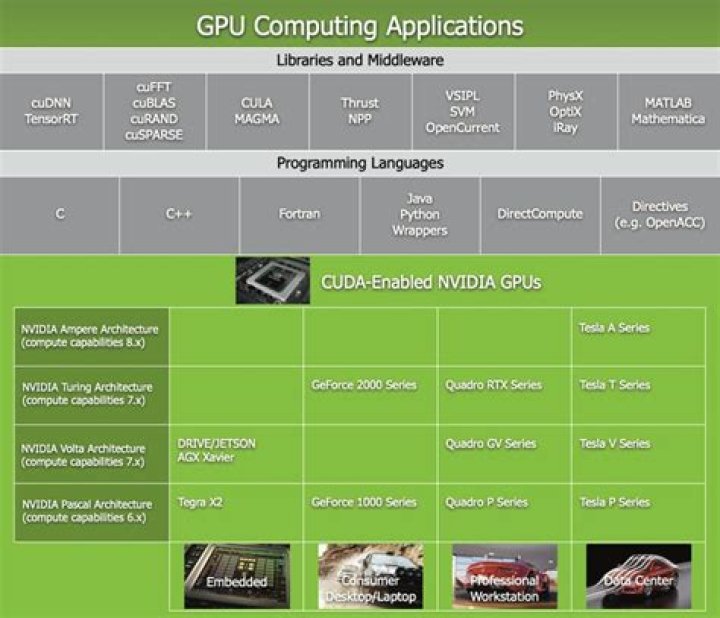

What is CUDA code?

CUDA (Compute Unified Device Architecture) is a parallel computing platform and application programming interface (API) model created by NVIDIA. CUDA-powered GPUs also support programming frameworks such as OpenACC and OpenCL, as well as HIP, by compiling such code to CUDA.

What are CUDA cores used for?

CUDA cores are parallel processors. Just as your CPU might be a dual- or quad-core device, NVIDIA GPUs host several hundred or several thousand cores. The cores process all the data fed into and out of the GPU, including the game graphics calculations whose results the end user sees on screen.

How many CUDA cores do I need?

A common gaming CPU has anywhere between 2 and 16 cores, but CUDA cores number in the hundreds, even in the lowliest of modern Nvidia GPUs. Meanwhile, high-end cards now have thousands of them.

Related Question Answers

What is the difference between CUDA and the CUDA Toolkit?

CUDA is a library used by many other programs, such as TensorFlow or OpenCV. The CUDA Toolkit is an extra set of software on top of CUDA that makes GPU programming with CUDA easier. For instance, it includes Nsight as the debugger (in Visual Studio).

How do I know if CUDA is working?

To verify a CUDA installation:

- Verify the driver version by looking at `/proc/driver/nvidia/version`.

- Verify the CUDA Toolkit version.

- Verify running CUDA GPU jobs by compiling the samples and executing the deviceQuery or bandwidthTest programs.
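As a rough stand-in for the `deviceQuery` sample, the sketch below uses CUDA runtime API calls to report the driver and runtime versions and each device's compute capability (the `major.minor` pair in `cudaDeviceProp`). The helper name `decode_version` is made up for illustration, and it assumes CUDA's encoding of version numbers as 1000 × major + 10 × minor. It could be built with something like `nvcc query.cu -o query`, where the file name is an assumption.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Hypothetical helper: CUDA reports versions as 1000 * major + 10 * minor
// (for example 12020 for 12.2), so split that integer back apart.
void decode_version(int version, int *major, int *minor) {
    *major = version / 1000;
    *minor = (version % 1000) / 10;
}

int main() {
    int driver = 0, runtime = 0, major = 0, minor = 0;

    // Latest CUDA version the installed driver supports (0 if no driver).
    cudaDriverGetVersion(&driver);
    decode_version(driver, &major, &minor);
    printf("Driver supports CUDA %d.%d\n", major, minor);

    // Version of the CUDA runtime this program was built against.
    cudaRuntimeGetVersion(&runtime);
    decode_version(runtime, &major, &minor);
    printf("Runtime is CUDA %d.%d\n", major, minor);

    // Count the devices; an error here usually means no usable GPU or driver.
    int count = 0;
    cudaError_t err = cudaGetDeviceCount(&count);
    if (err != cudaSuccess) {
        fprintf(stderr, "No usable CUDA device: %s\n", cudaGetErrorString(err));
        return 1;
    }

    for (int i = 0; i < count; ++i) {
        cudaDeviceProp prop;
        if (cudaGetDeviceProperties(&prop, i) != cudaSuccess) continue;
        printf("Device %d: %s, compute capability %d.%d, %d multiprocessors\n",
               i, prop.name, prop.major, prop.minor, prop.multiProcessorCount);
    }
    return 0;
}
```

If the program lists at least one device, the driver, runtime, and GPU are talking to each other, which is the same thing the bundled samples check in more detail.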

Why do I need CUDA?

CUDA is a development toolchain for creating programs that can run on NVIDIA GPUs, as well as an API for controlling such programs from the CPU. The benefit of GPU programming over CPU programming is that, for some highly parallelizable problems, you can gain massive speedups (up to about two orders of magnitude).

How do you program CUDA?

Given the heterogeneous nature of the CUDA programming model, a typical sequence of operations for a CUDA C program is:

- Declare and allocate host and device memory.

- Initialize host data.

- Transfer data from the host to the device.

- Execute one or more kernels.

- Transfer results from the device to the host.
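The five steps above can be sketched with a vector addition. This is a minimal illustration rather than a reference implementation: the kernel `add`, the helper `vector_add_max_error`, and the block size of 256 threads are all choices made for this example.

```cuda
#include <cmath>
#include <cstdio>
#include <vector>
#include <cuda_runtime.h>

// Kernel: each thread adds one pair of elements.
__global__ void add(const float *a, const float *b, float *c, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) c[i] = a[i] + b[i];
}

// Hypothetical helper: runs the five steps and returns the largest absolute
// error in the result, or -1.0f if any CUDA call fails.
float vector_add_max_error(int n) {
    size_t bytes = static_cast<size_t>(n) * sizeof(float);

    // 1. Declare and allocate host and device memory.
    std::vector<float> h_a(n), h_b(n), h_c(n);
    float *d_a = nullptr, *d_b = nullptr, *d_c = nullptr;
    if (cudaMalloc(&d_a, bytes) != cudaSuccess ||
        cudaMalloc(&d_b, bytes) != cudaSuccess ||
        cudaMalloc(&d_c, bytes) != cudaSuccess) {
        cudaFree(d_a); cudaFree(d_b); cudaFree(d_c);
        return -1.0f;
    }

    // 2. Initialize host data.
    for (int i = 0; i < n; ++i) {
        h_a[i] = static_cast<float>(i);
        h_b[i] = 2.0f * static_cast<float>(i);
    }

    // 3. Transfer data from the host to the device.
    cudaMemcpy(d_a, h_a.data(), bytes, cudaMemcpyHostToDevice);
    cudaMemcpy(d_b, h_b.data(), bytes, cudaMemcpyHostToDevice);

    // 4. Execute the kernel with enough blocks to cover all n elements.
    int threads = 256;
    int blocks = (n + threads - 1) / threads;
    add<<<blocks, threads>>>(d_a, d_b, d_c, n);
    cudaError_t err = cudaGetLastError();  // catches launch failures

    // 5. Transfer results from the device to the host.
    //    This blocking copy also waits for the kernel to finish.
    if (err == cudaSuccess)
        err = cudaMemcpy(h_c.data(), d_c, bytes, cudaMemcpyDeviceToHost);

    cudaFree(d_a); cudaFree(d_b); cudaFree(d_c);
    if (err != cudaSuccess) return -1.0f;

    float max_err = 0.0f;
    for (int i = 0; i < n; ++i)
        max_err = fmaxf(max_err, fabsf(h_c[i] - 3.0f * static_cast<float>(i)));
    return max_err;
}

int main() {
    printf("max error: %f\n", vector_add_max_error(1 << 20));
    return 0;
}
```

The host-side check at the end is not one of the five steps; it is only there so the sketch can confirm that the data made the round trip through the GPU.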

Is the GTX 1650 CUDA enabled?

Every GPU produced by NVIDIA since about 2008 is CUDA enabled. Even so, one user trying to run GROMACS on a laptop reported that the software stated their GTX 1650 was not enabled for computing, although `nvidia-smi` showed the graphics card and the drivers were installed.

Is CUDA C or C++?

Few people realize that CUDA is actually two new programming languages, both derived from C++. One, a subset of C++, is for writing code that runs on GPUs. Its role is similar to that of HLSL (DirectX) or Cg (OpenGL), but with more features and compatibility with C++.

Is CUDA still used?

CUDA is a closed NVIDIA framework and is not supported in as many applications as OpenCL, although support is still wide. Where it is integrated, top-quality NVIDIA support delivers very strong performance.

What graphics cards support CUDA?

CUDA works with all NVIDIA GPUs from the G8x series onwards, including the GeForce, Quadro, and Tesla lines. CUDA is compatible with most standard operating systems.

Do more CUDA cores mean better performance?

More cores are better when the algorithm scales well. More cores mean more registers, and so less dependence on memory. More cores also mean you need more CUDA threads to use all of them efficiently, which is why some lightweight workloads scale less than linearly across GPUs of different sizes.

What does CUDA stand for?

CUDA stands for Compute Unified Device Architecture. It is an extension of the C programming language created by NVIDIA. Using CUDA allows the programmer to take advantage of the massively parallel computing power of an NVIDIA graphics card for general-purpose computation.

How many CUDA cores does the RTX 2080 Ti have?

4,352 CUDA cores.

How important are CUDA cores?

All of a CPU's cores help it handle data: the more cores, the faster the CPU processes. CUDA cores work the same way that CPU cores do, except that they are found inside GPUs. Since CUDA cores are much smaller than CPU cores, you can fit more of them inside a GPU.

Are CUDA cores physical?

CUDA (Compute Unified Device Architecture) is a parallel computing platform and application programming interface (API) model by NVIDIA. It accesses the GPU hardware instruction set and other parallel computing elements. The individual physical cores inside the GPU that execute CUDA code are known as CUDA cores.

How many cores does a GPU have?

A CPU typically has four to eight cores, while a GPU consists of hundreds of smaller cores. Together, they crunch through the data in the application. This massively parallel architecture is what gives the GPU its high compute performance.

Can I use CUDA without an NVIDIA GPU?

You should be able to compile CUDA code on a computer that doesn't have an NVIDIA GPU. However, the CUDA 5.5 installer will complain and refuse to install if you don't have a CUDA-compatible graphics card installed.

Which GPU has the most cores?

NVIDIA TITAN V has the power of 12 GB HBM2 memory and 640 Tensor Cores, delivering 110 teraflops of performance.

| NVIDIA TITAN V | |
|---|---|
| Tensor Cores | 640 |
| CUDA Cores | 5120 |